Robots Exclusion Checker

By Sam Gipson


Download and install version 1.1.5 of the Robots Exclusion Checker extension from the Microsoft Edge store. The Robots Exclusion Checker add-on is helpful for desktop and mobile users alike.

Robots Exclusion Checker extension for Edge

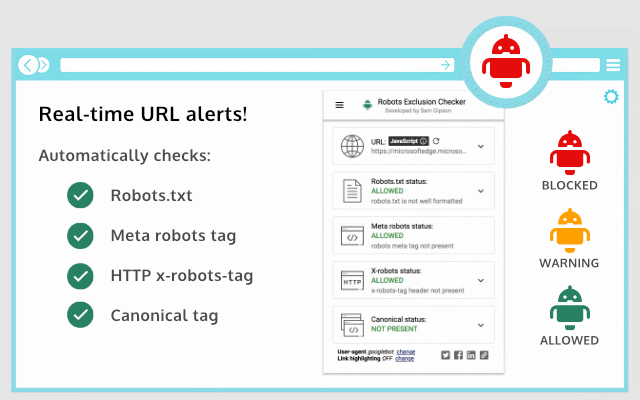

Microsoft Edge is a widely used browser, and its user base grows day by day. The Robots Exclusion Checker extension for Microsoft Edge is designed to visually indicate whether any robots exclusions are preventing your page from being crawled or indexed by search engines. This guide will help you download Robots Exclusion Checker extension 1.1.5 from this website. Robots Exclusion Checker is developed by Sam Gipson. First, install the Edge browser; this guide then helps you add the extension to it.

If you want to add an extension to the latest version of Microsoft Edge, the process is simple and straightforward. The following steps will enable you to download and install any extension you might want to use.


Download Robots Exclusion Checker extension for Microsoft Edge

Edgeaddons.com is a popular alternative website for downloading Microsoft Edge extensions for free. You can download extensions from it without any registration. Robots Exclusion Checker is listed in the Developer Tools category of the Microsoft Edge web store.

You can download the free Robots Exclusion Checker extension 1.1.5 simply by visiting our website. No special technical skills are required to save the files on your computer. So what are you waiting for? Go ahead!

Robots Exclusion Checker extension Features

## The extension reports on 5 elements:

1. Robots.txt

2. Meta Robots tag

3. X-robots-tag

4. Rel=Canonical

5. UGC, Sponsored and Nofollow attribute values *NEW*

– Robots.txt

If a URL you are visiting is being affected by an “Allow” or “Disallow” within robots.txt, the extension will show you the specific rule within the extension, making it easy to copy or visit the live robots.txt. You will also be shown the full robots.txt with the specific rule highlighted (if applicable). Cool eh!
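To make the idea of "the specific rule" concrete, here is a minimal Python sketch of how a matching robots.txt rule could be found for a URL. It is not the extension's own code: the function name `find_rule` is hypothetical, it only handles plain path prefixes (no `*` or `$` wildcards), and it assumes the longest matching rule wins, with "Allow" winning ties.

```python
from urllib.parse import urlsplit


def find_rule(robots_txt, user_agent, url):
    """Return the (directive, path) rule that applies to url, or None.

    Simplified sketch: prefix matching only, longest rule wins.
    """
    path = urlsplit(url).path or "/"
    groups = {}          # user-agent (lowercase) -> list of (directive, path)
    current_agents = []  # agents of the group currently being read
    seen_rule = False    # True once the current group has a rule line

    for raw in robots_txt.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments
        if ":" not in line:
            continue
        field, value = (part.strip() for part in line.split(":", 1))
        field = field.lower()
        if field == "user-agent":
            if seen_rule:  # a user-agent line after rules starts a new group
                current_agents = []
                seen_rule = False
            current_agents.append(value.lower())
            groups.setdefault(value.lower(), [])
        elif field in ("allow", "disallow"):
            seen_rule = True
            if value:  # an empty Disallow blocks nothing
                for agent in current_agents:
                    groups[agent].append((field, value))

    # Use the agent's own group if present, otherwise fall back to "*".
    rules = groups.get(user_agent.lower(), groups.get("*", []))
    matches = [rule for rule in rules if path.startswith(rule[1])]
    if not matches:
        return None
    return max(matches, key=lambda rule: (len(rule[1]), rule[0] == "allow"))


robots = """
User-agent: *
Disallow: /private/
Allow: /private/public.html
"""
print(find_rule(robots, "Googlebot", "https://example.com/private/report"))
# ('disallow', '/private/')
```

A result of `None` means no rule matched, so the URL would be treated as allowed.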

– Meta Robots Tag

Any Robots Meta tags that direct robots to “index”, “noindex”, “follow” or “nofollow” will flag the appropriate Red, Amber, or Green icons. Directives that won’t affect Search Engine indexation, such as “nosnippet” or “noodp”, will be shown but won’t be factored into the alerts. The extension makes it easy to view all directives, along with showing you any HTML meta robots tags in full that appear in the source code.
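As a rough illustration of that split between alerting and display-only directives, the Python sketch below reads the meta robots tags from a page's HTML and sorts the directives into two lists. The names `MetaRobotsParser` and `split_directives` are hypothetical, and the sketch does not try to reproduce the extension's icon colors or its handling of bot-specific meta tags.

```python
from html.parser import HTMLParser

# Directives the text above says are factored into the alerts.
ALERT_DIRECTIVES = {"index", "noindex", "follow", "nofollow"}


class MetaRobotsParser(HTMLParser):
    """Collects the directives from every <meta name="robots"> tag."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() == "robots":
            content = attr.get("content", "")
            self.directives += [
                d.strip().lower() for d in content.split(",") if d.strip()
            ]


def split_directives(html):
    """Return (alerting, informational) directive lists for a page."""
    parser = MetaRobotsParser()
    parser.feed(html)
    alerting = [d for d in parser.directives if d in ALERT_DIRECTIVES]
    informational = [d for d in parser.directives if d not in ALERT_DIRECTIVES]
    return alerting, informational


page = '<html><head><meta name="robots" content="noindex, nosnippet"></head></html>'
print(split_directives(page))
# (['noindex'], ['nosnippet'])
```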

– X-robots-tag

Spotting any robots directives in the HTTP header has been a bit of a pain in the past but no longer with this extension. Any specific exclusions will be made very visible, as well as the full HTTP Header – with the specific exclusions highlighted too!
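For readers who want to check the header by hand, here is a small Python sketch that pulls robots directives out of a list of HTTP response headers. The function name `x_robots_directives` is hypothetical, and the sketch assumes simple comma-separated values; it does not handle bot-specific prefixes such as `googlebot:`.

```python
def x_robots_directives(headers):
    """Return all X-Robots-Tag directives found in (name, value) header pairs."""
    directives = []
    for name, value in headers:
        if name.lower() == "x-robots-tag":  # header names are case-insensitive
            directives += [d.strip().lower() for d in value.split(",") if d.strip()]
    return directives


# Example headers, written out by hand rather than fetched from a live site.
response_headers = [
    ("Content-Type", "text/html; charset=utf-8"),
    ("X-Robots-Tag", "noindex, nofollow"),
]
print(x_robots_directives(response_headers))
# ['noindex', 'nofollow']
```

The same list of pairs can be obtained from a real response, for example with `getheaders()` on the object returned by Python's `urllib.request.urlopen`.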

– Canonical Tags

Although the canonical tag doesn’t directly impact indexation, it can still impact how your URLs behave within SERPs (Search Engine Results Pages). If the page you are viewing is Allowed to bots but a Canonical mismatch has been detected (the current URL is different from the Canonical URL) then the extension will flag an Amber icon. Canonical information is collected on every page from within the HTML <head> and HTTP header response.
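A canonical mismatch check can be sketched in a few lines of Python. This is only an assumption about how such a comparison might work, not the extension's actual logic: the function name `canonical_mismatch` is hypothetical, relative canonical URLs are resolved against the current page, and the hash part of each URL is ignored before comparing.

```python
from urllib.parse import urldefrag, urljoin


def canonical_mismatch(current_url, canonical_href):
    """Return True when the canonical URL differs from the current URL."""
    # Strip the #fragment, which never reaches the server.
    current = urldefrag(current_url).url
    # Resolve a relative canonical (e.g. "/page") against the current URL.
    canonical = urldefrag(urljoin(current_url, canonical_href)).url
    return current != canonical


print(canonical_mismatch("https://example.com/a?x=1#top", "https://example.com/a?x=1"))
# False
print(canonical_mismatch("https://example.com/a?x=1", "/a"))
# True
```

In the second call the canonical URL drops the query string, so the two URLs differ and the page would count as a mismatch.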

– UGC, Sponsored, and Nofollow

A new addition to the extension gives you the option to highlight any visible links that use a “nofollow”, “UGC” or “sponsored” rel attribute value. You can control which links are highlighted and set your preferred color for each. If you’d prefer this disabled, you can switch it off entirely.
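To show which links such a highlighter would pick up, the Python sketch below scans a page's HTML for links whose rel attribute contains one of the three values. The class name `RelLinkParser` is hypothetical, and the sketch only lists the links; it does not color them or check whether they are visible.

```python
from html.parser import HTMLParser

TRACKED_REL_VALUES = {"nofollow", "ugc", "sponsored"}


class RelLinkParser(HTMLParser):
    """Collects (href, matching rel values) for every flagged <a> tag."""

    def __init__(self):
        super().__init__()
        self.flagged = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attr = dict(attrs)
        # rel is a space-separated list and is compared case-insensitively.
        rel_values = set((attr.get("rel") or "").lower().split())
        hits = rel_values & TRACKED_REL_VALUES
        if hits:
            self.flagged.append((attr.get("href"), sorted(hits)))


html = (
    '<a href="/x" rel="nofollow ugc">x</a>'
    '<a href="/y">y</a>'
    '<a href="/z" rel="Sponsored">z</a>'
)
parser = RelLinkParser()
parser.feed(html)
print(parser.flagged)
# [('/x', ['nofollow', 'ugc']), ('/z', ['sponsored'])]
```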

## User-agents

Within settings, you can choose one of the following user-agents to simulate what each Search Engine has access to:

1. Googlebot

2. Googlebot news

3. Bing

4. Yahoo

## Benefits

This tool will be useful for anyone working in Search Engine Optimisation (SEO) or digital marketing, as it gives a clear visual indication if the page is being blocked by robots.txt (many existing extensions don’t flag this). Crawl or indexation issues have a direct bearing on how well your website performs in organic results, so this extension should be part of your SEO developer toolkit. It is also an alternative to some of the common robots.txt testers available online.

This extension is useful for:

– Faceted navigation review and optimization (useful to see the robot control behind complex / stacked facets)

– Detecting crawl or indexation issues

– General SEO review and auditing within your browser

## Avoid the need for multiple SEO Extensions

Within the realm of robots and indexation, there is no better extension available. In fact, by installing Robots Exclusion Checker you will avoid having to run multiple extensions that slow down your browser.

CHANGELOG:

1.0.2: Fixed a bug preventing meta robots from updating after a URL update.

1.0.3: Various bug fixes, including better handling of URLs with encoded characters. Robots.txt expansion feature to allow the viewing of extra-long rules. Now JavaScript history.pushState() compatible.

1.0.4: Various upgrades. Canonical tag detection added (HTML and HTTP Header) with Amber icon alerts. Robots.txt is now shown in full, with the appropriate rule highlighted. X-robots-tag is now highlighted within full HTTP header information. Various UX improvements, such as “Copy to Clipboard” and “View Source” links. Social share icons added.

1.0.5: Forces a background HTTP header call when the extension detects a URL change but no new HTTP header info – mainly for sites heavily dependent on JavaScript.

1.0.6: Fixed an issue with the hash part of the URL when doing a canonical check.

1.0.7: Forces a background body response call in addition to HTTP headers, to ensure a non-cached view of the URL for JavaScript-heavy sites.

1.0.8: Fixed an error that occurred when multiple references to the same user-agent were detected within the robots.txt file

1.0.9: Fixed an issue with the canonical mismatch alert

1.1.0: Various UI updates, including a JavaScript alert when the extension detects a URL change with no new HTTP request

1.1.1: Added additional logic for Meta robots user-agent rule conflicts

1.1.2: Added a German language UI

1.1.3: Added UGC, Sponsored and Nofollow link highlighting

1.1.4: Switched off nofollow link highlighting by default on new installs and fixed a bug related to HTTP header canonical mismatches

1.1.5: Bug fixes to improve robots.txt parser

Found a bug or want to make a suggestion? Please email: extensions@samgipson.com

How do I install the Robots Exclusion Checker extension?

First, open your browser and click on the three lines at the top left of your screen. Next, select “More tools”, then “Extensions”, then “Get extensions”, and choose the extension you want to use. Press “Add”. Wait a few moments, and the Robots Exclusion Checker extension will be installed.

How do I uninstall the Robots Exclusion Checker extension?

To uninstall an extension, open your browser, click on the three lines at the top left of your screen, and select “More tools”. You will then see your installed extensions. Select the extension and click its uninstall button. After a few moments, the Robots Exclusion Checker extension will be removed.

In conclusion, the process for adding the Robots Exclusion Checker to your browser is simple. An extension like this not only solves a problem but also adds a greater degree of functionality to the experience of using the Edge browser. If you have any problem installing the Robots Exclusion Checker add-on, feel free to comment below and we will reply with an answer.

Technical Information

| Version: | 1.1.5 |
|---|---|
| File size: | 157 KB |
| Language: | English (United States) |
| Copyright: | Sam Gipson |